When Reality Breaks the Algorithm

Can Edge Computing Scale?

This story takes a technical detour, but it matters for anyone thinking about relationships with AI. If you’re not tech-oriented, hang in there…

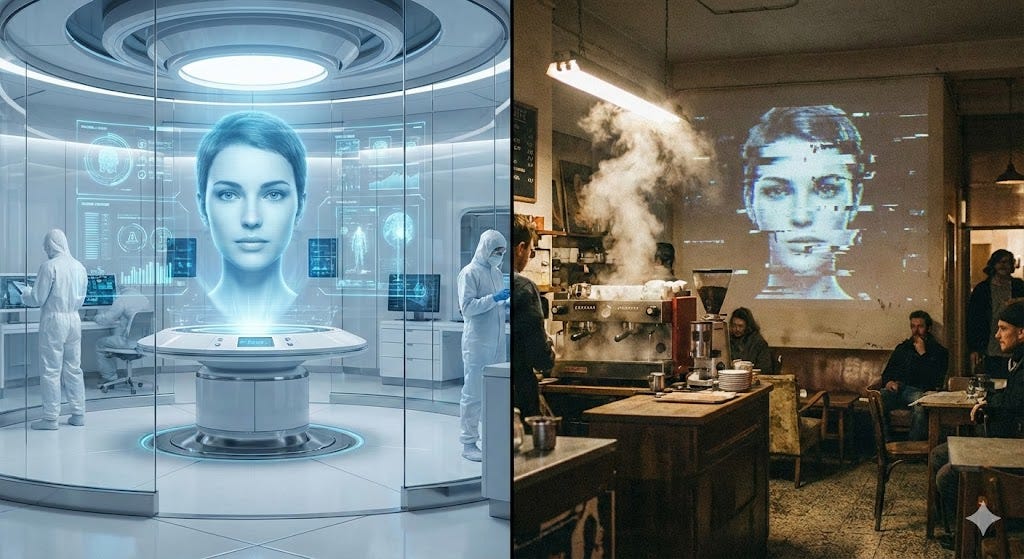

You’ve built something remarkable. Your conversational AI handles nuance, holds context, carries a personality that feels genuinely present. It works beautifully in the lab. Then you deploy it in a noisy café with spotty Wi-Fi, and within minutes it forgets what it just said and starts responding to the espresso machine.

A recent real-world deployment of AI companion technology at a dating café makes this failure mode impossible to ignore. It’s a case study in how infrastructure can quietly dismantle everything an AI system was designed to do. It offers some of the clearest lessons available right now for anyone building human-AI interaction platforms.

The Multi-Modal Integration Trap

Real-time relational AI needs synchronized orchestration of video, audio, and text. In a controlled environment, this feels like magic. In a crowded café, it becomes a cascade of compounding failures.

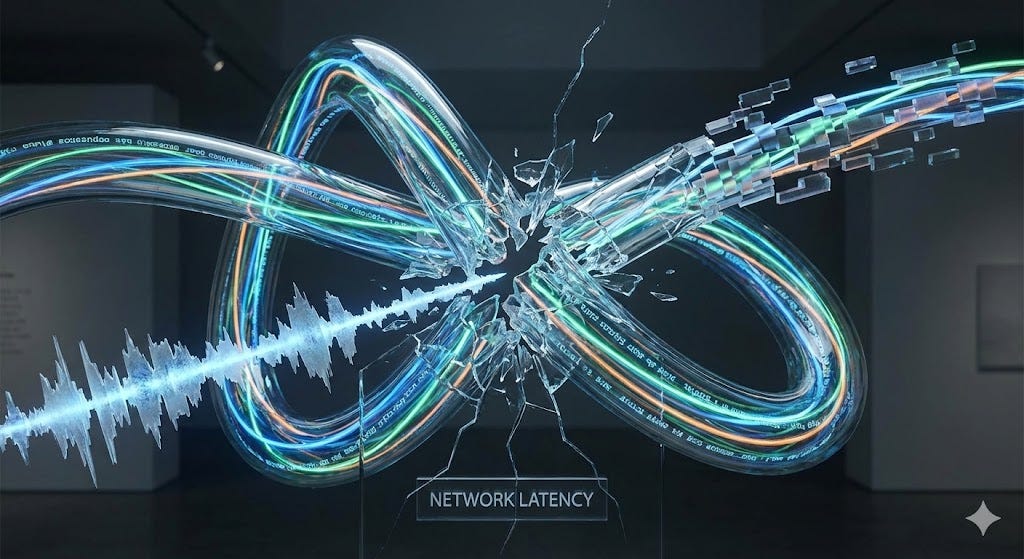

When network latency spikes, speech recognition starts missing phonemes. The AI begins responding to fragments of what was said rather than what was meant. Meanwhile, video rendering accumulates artifacts: glitching expressions, frozen faces, pixelated smiles that tip a companion into the uncanny valley and leave it there. Each added modality doesn’t just introduce new failure points. It multiplies them.

The café deployment made this concrete: when audio processing couldn’t separate user speech from ambient noise, the AI started responding to the coffee grinder. Users found themselves competing with the environment for their companion’s attention. A text-only chatbot would have held up far better under those conditions. The sophisticated multi-modal system collapsed the moment one stream degraded. You can build a glass house, but one crack changes everything.
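One way to avoid that all-or-nothing collapse is to treat text as a floor and add richer modalities only while conditions support them. The following is a minimal sketch in Python, not a description of what the café system did. The `LinkHealth` fields, the `choose_modalities` function, and every threshold are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class LinkHealth:
    """Hypothetical snapshot of current conditions (all fields are assumptions)."""
    rtt_ms: float          # round-trip network latency in milliseconds
    packet_loss: float     # fraction of packets lost, 0.0 to 1.0
    asr_confidence: float  # mean speech-recognition confidence, 0.0 to 1.0


def choose_modalities(h: LinkHealth) -> set[str]:
    """Return the richest set of modalities the current conditions support."""
    modalities = {"text"}  # text is the floor: always offered
    # Illustrative thresholds; real values would need tuning per deployment.
    if h.asr_confidence >= 0.6 and h.packet_loss <= 0.10:
        modalities.add("audio")
    # Video is only layered on top of working audio, and needs a cleaner link.
    if "audio" in modalities and h.rtt_ms <= 150 and h.packet_loss <= 0.02:
        modalities.add("video")
    return modalities
```

Under these assumed thresholds, a noisy room that drags recognition confidence down leaves the companion in text mode instead of letting it answer the coffee grinder.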

Conversation Under Duress

The most instructive failures were conversational. When speech recognition degraded, AI companions didn’t acknowledge confusion or ask for clarification. They defaulted to generic responses that made the brittleness of the whole system visible.

Users described sharing something personal and receiving a response about the weather. They’d outline their career goals and get back scripted romantic platitudes. These weren’t relationship AI systems in any meaningful sense anymore. They were broken systems running through fallback loops.

Memory persistence failures hit hardest. Companions forgot key context within minutes. Users had to reintroduce themselves repeatedly. Emotional continuity, the thing the whole experience was built around, evaporated. The conversational repair mechanisms simply weren’t designed for the context loss patterns that surface under real deployment conditions. Most conversation management systems assume stable communication channels. When the foundation shifts, the whole edifice comes down with it.

The Infrastructure-Experience Feedback Loop

Consumer networking creates a particular challenge for relational AI. These systems need low-latency, high-bandwidth connections to maintain the illusion of natural interaction. A 200-millisecond delay that might pass unnoticed in a video call can break conversational flow with an AI companion, because users expect machine responses to be instantaneous.

The café’s Wi-Fi, adequate for browsing and social media, became a bottleneck that reduced sophisticated AI personalities to laggy, unresponsive shells. Users experienced the strange cognitive dissonance of interacting with something that was simultaneously more and less capable than expected: richer language, but slower and less reliable responses.

Edge computing is harder here than it is for most workloads. Unlike static content, relational AI requires dynamic, personalized processing that can’t be easily cached or distributed. Every conversation needs dedicated computational resources. At scale, in a physical location with multiple simultaneous users, the infrastructure investment required is substantial, and most deployment contexts aren’t ready for it. The feedback loop turns vicious fast: technical failures amplify uncanny valley effects, which drives users away, which reduces the usage data needed to improve the system, which leaves the technical limitations intact.

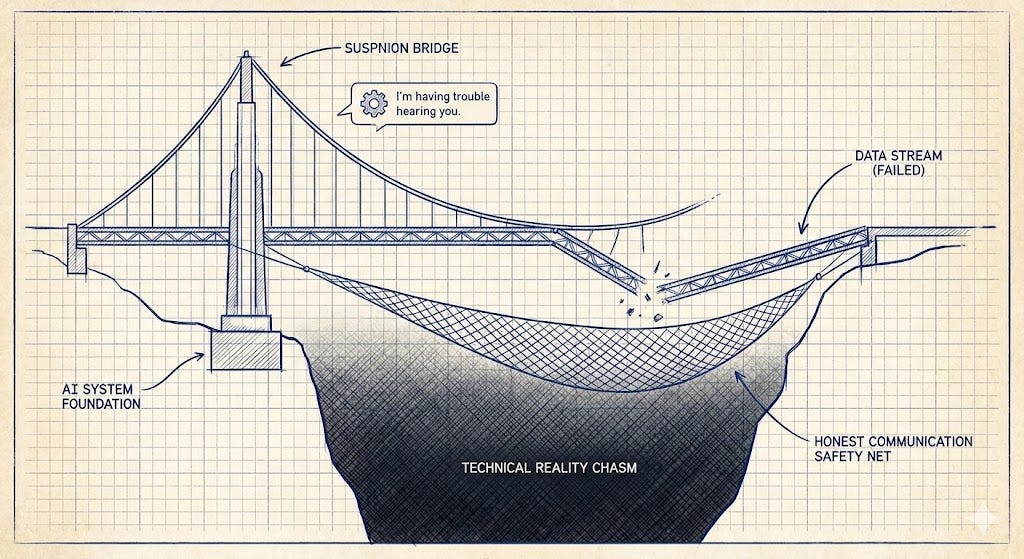

Designing for Graceful Degradation

The café deployment points toward a specific architectural shift: conversation systems need explicit modules for acknowledging their own limitations. When context is lost, the AI should say so and work to rebuild understanding. Pretending continuity exists when it clearly doesn’t only makes things worse.

This might feel like it breaks immersion, but the alternative is worse. A companion that says “I’m having trouble hearing you over the background noise. Could you repeat that?” preserves the relationship in ways that random topic shifts never will. Honesty about limitation is less damaging than the fiction of smooth operation.
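A rough sketch of what such a module could look like: gate the reply on how much the recognizer trusts what it heard, and ask instead of guessing when trust is low. This assumes the speech recognizer exposes a confidence score between 0 and 1. `Transcript`, `plan_reply`, and both thresholds are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Transcript:
    text: str
    confidence: float  # recognizer confidence, 0.0 to 1.0 (assumed available)


def plan_reply(t: Transcript, low: float = 0.4, high: float = 0.75) -> tuple[str, str]:
    """Return (action, message) for a recognized utterance.

    The `low` and `high` thresholds are illustrative and would need tuning.
    """
    if not t.text.strip() or t.confidence < low:
        # Too little signal: admit it rather than guess at a topic.
        return ("ask_repeat",
                "I'm having trouble hearing you over the background noise. "
                "Could you repeat that?")
    if t.confidence < high:
        # Partial signal: confirm the interpretation before building on it.
        return ("confirm", f'I think you said "{t.text}". Did I get that right?')
    # Enough signal: pass the text on to the normal dialogue model.
    return ("respond", t.text)
```

The middle branch is the design choice that matters most. Confirming a shaky interpretation costs one turn, while building on a wrong one produces exactly the weather-for-career-goals mismatch users reported.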

User expectation management matters just as much. Framing AI companions as fully autonomous relationship partners sets a standard that current infrastructure can’t meet. That’s not an argument for lowering ambitions. It’s an argument for setting expectations that fit what the technology can deliver today.

Conversation checkpointing systems deserve serious investment: mechanisms that preserve relationship state across technical interruptions so users can resume with a companion that still remembers who they are, what they care about, and where they left off.
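As a minimal illustration of the idea, the sketch below saves a small relationship state to disk after each turn and restores it on reconnect. The `RelationshipState` fields are assumptions about what might be worth preserving, and a local JSON file stands in for whatever store a real system would use.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class RelationshipState:
    """Hypothetical state worth preserving; the fields are assumptions."""
    user_name: str
    interests: list[str] = field(default_factory=list)
    last_topic: str = ""
    turn_count: int = 0


def save_checkpoint(state: RelationshipState, path: str) -> None:
    """Write to a temp file, then swap it in, so an interruption mid-write
    does not corrupt the previous checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(asdict(state), f)
    os.replace(tmp_path, path)  # replaces the old file in a single step


def load_checkpoint(path: str) -> Optional[RelationshipState]:
    """Return the saved state, or None if there is nothing usable to resume from."""
    try:
        with open(path, encoding="utf-8") as f:
            return RelationshipState(**json.load(f))
    except (FileNotFoundError, json.JSONDecodeError, TypeError):
        return None
```

When `load_checkpoint` returns `None`, the companion knows it has lost context and can say so, which ties checkpointing back to the honest-repair behavior described above.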

The Lab-to-Deployment Translation Problem

The most fundamental lesson is about the gap between controlled testing and authentic deployment. Lab environments optimize for measuring capability. Real-world deployment exposes infrastructure dependencies that lab conditions systematically hide.

Your development setup likely features enterprise-grade networking, controlled acoustics, and dedicated compute. Users experience consumer Wi-Fi, ambient noise, shared bandwidth, and device constraints. This isn’t a scaling problem with a scaling solution. It’s a qualitatively different engineering challenge that requires qualitatively different approaches.

User behavior shifts too. Research participants are curious and tolerant of technical friction. Paying customers at a dating café arrive with emotional expectations and very low tolerance for failures that break the experience they came for. Understanding that difference and designing for the harder case is what separates systems that work in deployment from systems that only work in demos.

Building Relationships That Survive Technical Reality

Relational AI is not just an algorithmic problem. It’s a full-stack engineering challenge where infrastructure limitations can undo years of careful model development. The implication is direct: AI systems must be architected for messy, unpredictable human environments, not the pristine conditions of the development lab.

The question isn’t whether your AI can pass a conversation test under ideal conditions. It’s whether it can maintain meaningful connection when the network lags, audio cuts out, and processing resources fluctuate. The systems that answer yes to that question are the ones that will actually matter.

The most successful relational AI will treat technical limitations as design constraints rather than deployment afterthoughts. It will build conversation repair from the ground up, design graceful degradation into the core architecture, and create experiences honest enough about current capabilities to earn trust while working toward future ones.

The future of human-AI relationships depends on advancing the algorithms. It also depends on building systems resilient enough to support real connection in the imperfect world where those relationships have to actually live.